Cultural fads, by definition, come and go. There were bowl cuts in the 70s, low rise jeans in the 90s, even fidget spinners recently had their time in the spotlight. But when scientific communities start embracing or generating fad solutions to complex problems, everyone may be in for trouble. At least that’s what Jesse Singal believes. WellWell recently spoke with the author of The Quick Fix: Why Fad Psychology Can’t Cure Our Social Ills, about the longstanding tradition of scientific bias, trendy behavioral psychology and what we’re often overlooking.

The Quick Fix aims to expose holes in recent behavioral science trends, which you call fad psychology, arguing most will never be enough to truly address social injustice or inequality. What are some recent examples and how are they failing despite their popularity?

I think a good example of why they’re popular would be the Implicit Association Test. It’s a test that can supposedly reveal people’s unconscious bias and, because it’s such a provocative idea, it appears to have become a general mainstay of diversity training across the country. But there’s never been strong evidence that the test actually measures anything useful, namely a propensity to act in a biased way, and at this point the creators of the test have acknowledged that it’s too statistically noisy to be used to diagnose individuals. So, it’s strange how this whole national conversation about racism has been rerouted because of this very sexy test that doesn’t have any sort of proven interventions behind it that actually lead to better outcomes.

Why do you think that this has gained so much traction? Are people just so starved for some action to be taken when facing daunting issues?

Yeah, that’s true at both the personal and the institutional level. At the personal level, taking the IAT, you can feel like you’re part of this struggle against racial inequality, and who wouldn’t want to be a part of that struggle? So, there’s an element of consciousness raising or looking inside of your own soul, really helped by technology. At the institutional level, we don’t really know what works in terms of diversity training; truthfully, there aren’t a lot of great options. So, if you’re the head of HR and you have a prominent Harvard psychologist saying, ‘this new computer tool is the best we’ve got’, I wouldn’t really blame that HR person for falling for that narrative. I think it’s understandable why they would.

What is the alternative then, because obviously this is a huge issue? Are these tests doing more harm than good or are they just not effective?

I think part of the more-harm-than-good concern is the risk that it will misdiagnose people, and it is not ethical to tell people they are implicitly biased when we’re not telling them that the test is really noisy. Mostly though, it’s just the opportunity cost, because we’re spending millions of dollars on implicit bias interventions. But when it comes to racially discriminatory outcomes, some part of the pie is implicit bias and some part of the pie is explicit bias. There’s also structural racism, which is much deeper rooted and harder to address. So yes, I think that there’s harm in that we’re chasing one aspect of this because we happen to have this sexy tool that supposedly measures it, and losing sight of the bigger picture.

When people have failed this test or it determines that they have a bias, what has been the fallout? Are people losing their jobs?

Those are the sort of dystopian scenarios that critics over time have raised. I am not aware, yet, of any stories that had someone either losing their job or anything like that.

You mention in the book a huge part of this issue is the replication crisis. What role does that play?

The replication crisis reflects that there are a lot of subtle ways science can go wrong and a lot of shoddy methodological tools that scientists have embraced over the years, often unintentionally. Basically, what happened around 2010 is that there were some signs that a lot of the studies in the scientific literature might not be up to snuff, and researchers started trying in earnest to replicate them. The theory is, if you can do the same study and get the same result, that’s a good sign that you’re looking at a real fact. What happened instead, depending on the subfield of psychology, is that the replication rate is only about 50 percent or so, sometimes lower, which means a vast amount of published research points to common findings that might not be real. It really kicked the legs out from under psychology, particularly social psychology.

Is there any bias going into these studies, even regarding who may be developing them or paying for them?

I think in some cases yes, but I also think it’s bigger than that. It’s human nature and the way scientific journals are structured. For a long time, most journals would not publish null results, meaning if you wanted to get published, you’d need to find an effect. But it’s just as useful to publish a study that finds no effect, because you’re adding to scientific knowledge. Until recently, that would be a very hard thing to get published. So, I think there are some cases where this is, generally, old-school corruption based on who’s funding the study. I also think it’s a little bit more than that, but still a big problem.

Is part of this that there’s currently a lack of sensible contrarians? Most are only known for saying things that are obviously problematic.

Yeah, I think contrarianism has an image problem right now. There’s a type of contrarianism that I’m not a fan of, where you’re basically just yelling offensive stuff because you can and for the sake of it. But I think what I’m trying to do is a different form of contrarianism, where you’re hopefully making a substantive argument, pointing out that what most people think about something is off base and that we’re losing something by holding on to this false belief just because it’s politically expedient or trendy right now.

The Quick Fix notes that the simplest of half-baked ideas are the ones that tend to catch on and gain momentum primarily because the brain has an easier time comprehending them. How do we get out of this? How do we combat something that might be human nature?

It’s really tough. I mean, if I give you a one-sentence account of how I can make soldiers more resilient and resistant to PTSD, you’ll remember that account. You will not remember when I explain to you the slightly more complicated process of actually treating PTSD. I don’t know the answer to that. I’m basically like a complexity monger. I like to say “it’s complicated” to readers and listeners over and over and over. I think the greater the complexity, humility and nuance, the better off we’ll be. But there will always be demagogues and overplayed scientists and pundits who try to offer up simple solutions. So, my book is just an attempt to nudge them a tiny bit in the right direction on that front.

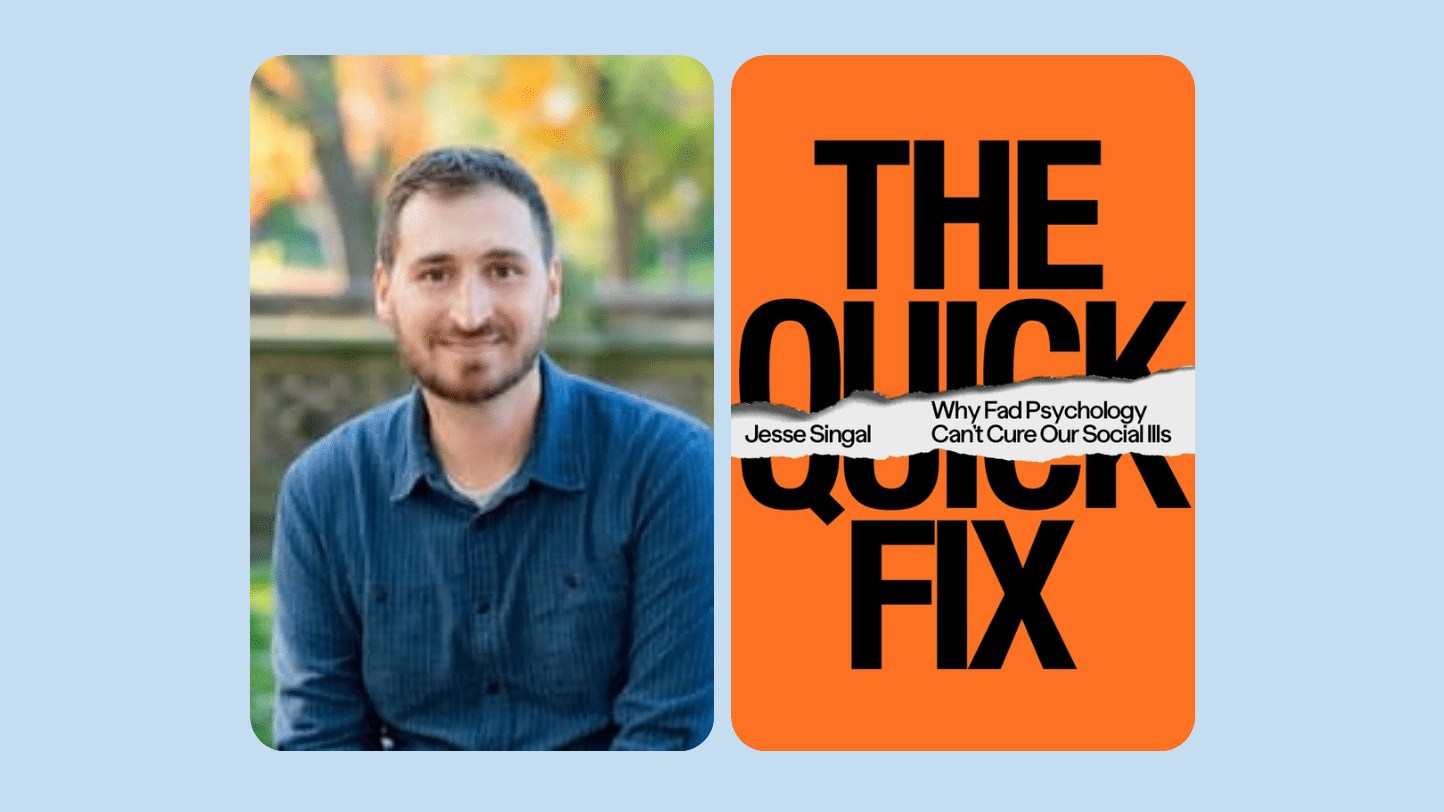

About Jesse Singal

Jesse Singal is a Brooklyn-based writer who is a contributing writer at New York Magazine, where he was formerly a senior editor and writer-at-large. He is the cohost of the podcast Blocked and Reported, and has written for The New York Times, The Atlantic, The Boston Globe, and other outlets on subjects ranging from psychology’s replication crisis to the strangest corners of internet culture to PTSD and youth gender dysphoria. His first book, The Quick Fix: Why Fad Psychology Can’t Cure Our Social Ills, is out now.

Learn More

www.jessesingal.com

Twitter @jessesingal